When we wrote three weeks ago about how professional institutes should choose the first programme to digitise, and last week about what "doing it properly" actually means as a piece of digital design, we expected the replies to be about strategy. Most of them weren't. They were about execution. Specifically, they asked this question, in various phrasings: "We know which programme. We've agreed what good looks like. The board has approved it. Now what?"

It is the question we hear most consistently from institute teams who have done the right thinking, made the right decision, and then run into the gap between approval and launch. That gap is where most institute digitisation projects quietly stall. Twelve months pass, the programme is still "in development," nobody can quite say why, and a soft institutional fatigue settles in. The board moves on. The next round of approvals gets harder.

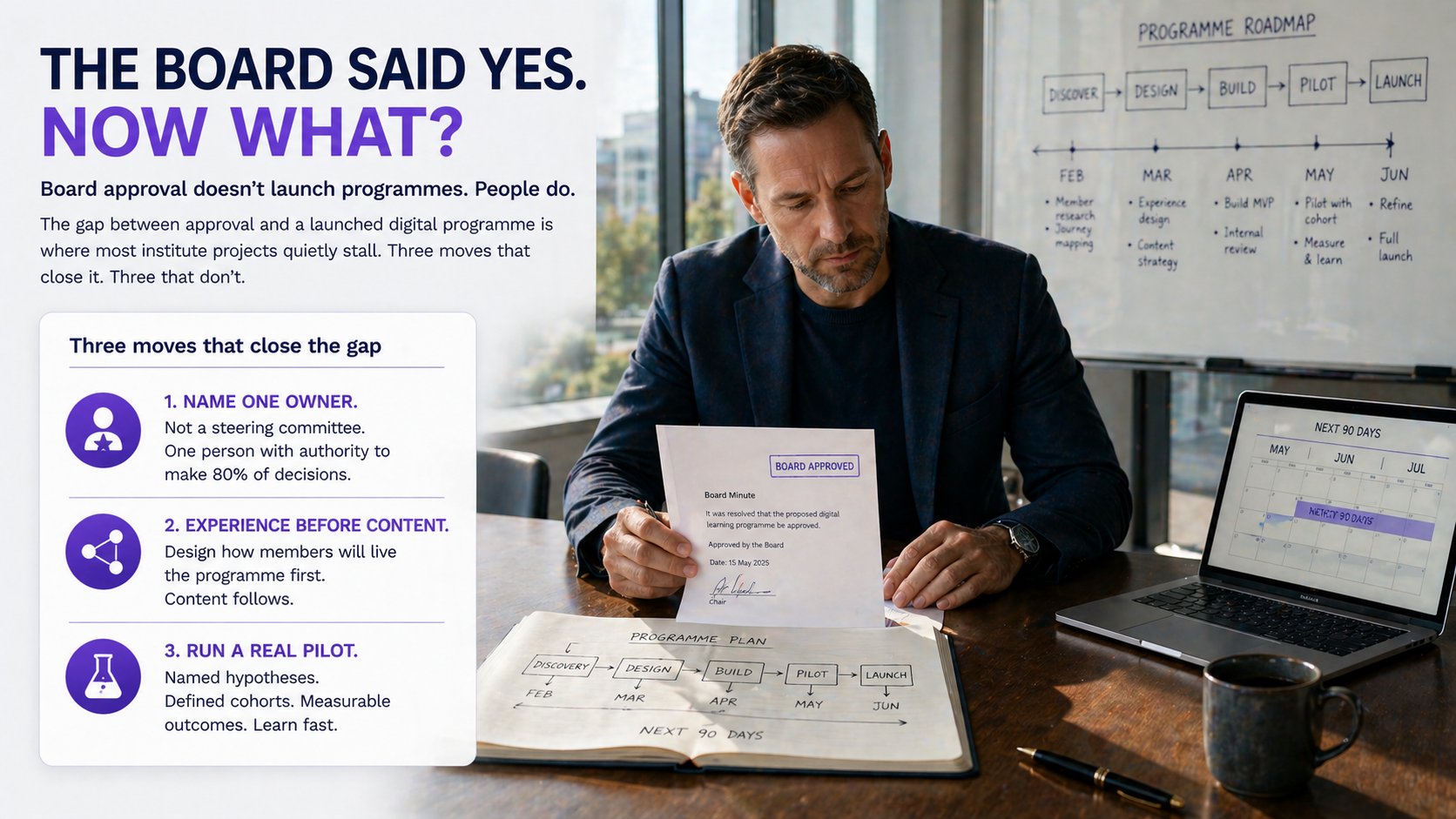

Closing that gap is not a question of more strategy. It is a question of three specific moves at the start of execution, and three categories of mistake that consistently eat the months between approval and launch.

Three moves that get a programme from approval to launch

One: name a single accountable owner. Not a steering committee. Not a working group. One person whose name is on the programme, whose schedule includes it as a primary commitment, and whose authority extends to making roughly eighty per cent of the decisions involved without escalating. Steering committees are useful for governance — for the strategic decisions and for the risk picture. They are poor delivery vehicles. Programmes run by committees are programmes that miss eighteen-month launch windows because the next decision is always two weeks away.

The owner does not have to be senior. They have to be capable, available, and visibly trusted by the executive who approved the work. Some of the strongest digital programmes we have seen at institutes were delivered by a head of programme or a senior academic lead rather than by a director — because that person had genuine authority, day-to-day availability, and a personal stake in landing it well. The senior sponsor remained accountable upward; the owner ran the work.

Two: design the cohort experience before the content. This is the move institutes most often get backwards, because content production is the biggest cost line and the most visible activity, so it is the work that feels most urgent. It is not. The right first deliverable is a clear, concrete picture of how a member will experience the programme — the rhythm of the weeks, the cadence of touchpoints, the cohort moments, the assessment journey, the rituals that mark the start and the end. Get that right and the content production decisions follow naturally, because every video, every reading, every reflection prompt is being produced for a moment in the experience, not as a free-standing artefact.

Get it wrong (start content production before the experience design is settled) and the institute ends up with a large library of well-made material that does not fit together. Members feel the incoherence even if they cannot name it. The second cohort drops off. The retention data looks poor. The instinct, often, is to produce more content. It does not help.

Three: run a real pilot, not a soft launch. The distinction matters. A pilot is a deliberate experiment with named hypotheses, success metrics, a defined cohort size, and a clear stop/go decision at the end. A soft launch is "let's put something live with a small group and see how it goes." Soft launches produce ambiguous outcomes, and ambiguous outcomes produce slow second decisions. Pilots produce data, good or bad, and data produces the courage to commit to the full launch, or the courage to fix the specific thing that did not work.

The institutes that get this right typically run a pilot cohort of twenty-five to fifty people, instrument it properly, set out in advance what success and failure look like, and let the data, rather than internal politics, make the case for the full launch. The institutes that do not end up with a programme that nobody on the executive team fully trusts, because nobody has clean evidence that it works.

Three categories of mistake that eat the months

The technology-first decision. Choosing the platform before the programme is designed is the single most expensive mistake in institute digitisation. Every platform has a centre of gravity: the designs it supports naturally and the ones it supports only painfully. Picking the platform first means designing the programme around the tool's preferences rather than around the members' needs. Pick the programme experience first, and let the platform decision follow from what the experience requires. You will end up with a smaller, simpler platform decision and a much better programme.

The internal capability illusion. Most institute training teams are excellent at running classroom programmes, and most have very limited experience designing for digital. There is no shame in that — it is a genuinely different discipline. The mistake is in assuming the gap is small enough to bridge during the project. It rarely is. Bring in the digital design capability early — internally if it exists, externally if it does not — and accept that it is a specialism, not a skill set the team will pick up by doing.

A series of small launches that never builds into momentum. This is the slowest of the three failures and often the hardest to spot from inside. The programme launches quietly. A second programme follows six months later. A third another eight months after that. Each one is fine in isolation. None is positioned as a meaningful institutional move. Members do not register that something is changing; the executive team does not have a story to tell about transformation; the board stops asking. The institutes that build momentum do so by treating the first launch as a deliberate event — visible, framed, accompanied by a clear narrative about what comes next. Quiet launches are easy to live with and easy to forget.

The simple framing

The board approved the work because they trusted that someone in the room would make it happen. The next ninety days are about being that person — and if you are leading the institute, about making sure that person is named, supported, and given the authority to do the job properly.

Programme selection and design discipline matter enormously. We have written about both. But neither survives bad execution, and both compound when execution is good. The institutes whose digital portfolios will look strong in two years are the ones that, in the next ninety days, name an owner, design the experience first, and run a real pilot.

Where we come in

At LearnFrame, this is the work we do alongside the institutes and membership bodies we partner with. We bring three decades of digital learning experience, a senior strategic team in Dublin, and a development capability that delivers enterprise-quality work at a fraction of typical agency cost. That cost base is a structural advantage we have built deliberately, not a compromise on quality.

Our Programme Design Audit and Board Briefing — both free and ungated on the resources page — are the right place to start if you have not yet seen them. If you have, and you are now in the gap between board approval and execution, we would welcome a conversation about what the next ninety days look like.