Last week we wrote about how professional institutes should pick the first programme to digitise — the four signals to look for, the three categories to avoid. The piece kept circling back to a single instruction: pick the right programme, then build it properly.

That last word, "properly", did a lot of work in the article, and we deliberately did not unpack it. This week, we will.

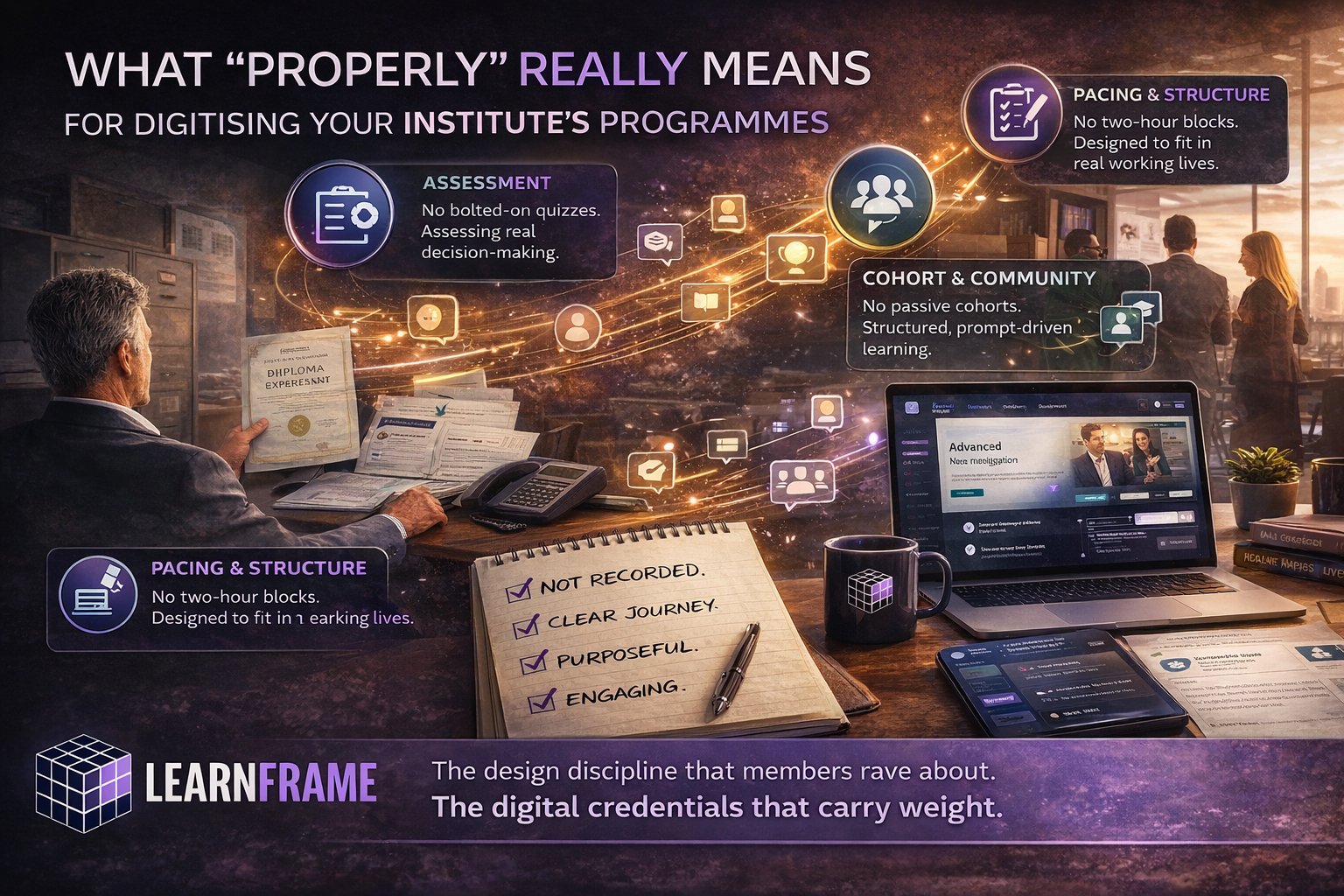

It matters because the difference between the institutes whose members rave about the digital version of a programme and those whose members keep asking when the classroom version is coming back is not effort. Both teams are working hard. The difference is that one team has a shared definition of what "properly" means as a piece of digital design, and the other is doing its best with a definition imported from the classroom.

Here is what we mean by properly. Six dimensions. Each one is a place where the classroom default does not translate, and where the absence of a deliberate digital choice produces a programme that quietly fails to land.

One: pacing and structure

Classroom programmes are paced by the room. A two-day off-site, a six-week evening series, a Friday-afternoon module — the pacing is set by when the room is booked and how long people can sit in it.

Digital programmes inherit none of this. Members consume them in the gaps of their working week: twenty minutes on a commute, an hour on a Sunday morning, the half-hour before a meeting starts. A programme designed in two-hour blocks does not survive contact with that reality. Members start the block, get pulled away, lose context, and never come back.

A properly designed digital programme is built around the time working professionals actually have. Units sized to be completed in a single sitting. Clear stopping and resuming points. A rhythm that respects the working week rather than competing with it. The pacing is a piece of design, not a translation of the classroom timetable.

Two: assessment

In the classroom, assessment usually shows up at the end. A written exam, a final presentation, a panel interview. The format is well-understood and culturally accepted. In digital, the same logic produces something hollow — a multiple-choice quiz at the end of a module that members complete on autopilot and that proves nothing about whether they have actually learned anything.

Properly designed digital assessment uses the medium. Scenario-based decisions that require members to apply judgment to real situations. Artefacts they create and submit. Evidence of practice rather than recall of content. And — this is the part most institutes miss — assessment that is woven through the programme rather than bolted on at the end, so that members can see their progress against the learning outcomes as they go.

For a professional institute, this is not a minor design choice. It is the thing that determines whether the digital credential carries the same weight as the classroom one. If the assessment is weak, the credential is weak, regardless of how good the rest of the programme is.

Three: cohort and community

The conversations in the corridor at a classroom programme are often where the real learning happens. The off-the-record questions, the war stories, the comparisons of approach across firms and sectors. None of this happens by accident in a digital programme. If you do not engineer it, it does not exist.

A properly designed digital cohort experience does three things deliberately. It makes members visible to each other: they know who is on the programme, what they bring, and where they are in their careers. It facilitates structured conversation, with prompts and provocations that turn a group of strangers into a working cohort. And it produces a network effect, so that members finish the programme with relationships, not just a certificate.

Institutes have a structural advantage here that most do not exploit. Their members already share a profession, a set of standards, and often a regulatory framework. Done well, a digital cohort can produce a tighter community than the classroom version, because it lasts longer and operates across geographies. Done badly, it leaves members unaware that the cohort exists at all.

Four: faculty presence

Classroom programmes lean heavily on the presence of practitioners — the partner who tells the war story, the regulator who explains the thinking behind the rule, the chief executive who answers questions on the record. The credibility of the programme is partly the credibility of the people in the room.

A digital programme that loses this presence loses something important. And the default response — recording faculty as talking-head video — does not solve the problem. It compresses what was a relationship into a piece of content.

Properly designed faculty presence in digital is more deliberate and, paradoxically, more intimate. Live or near-live touchpoints: masterclasses, Q&A sessions, office hours. Faculty contributions woven into the programme as engagement rather than performance. A clear, fast path for members to ask questions and get an expert response. The goal is not to make the digital format feel like the classroom; it is to make faculty presence feel current, accessible, and genuinely there.

Five: member experience

The journey from enrolment to completion is invisible in the classroom because the room handles most of it. Members turn up, they are oriented in the first session, they know what is coming because the timetable is on the wall, and they finish when the final session ends.

Digital removes all of that scaffolding. If the institute does not deliberately rebuild it, members are left to navigate the programme on their own — and a meaningful percentage will simply drift away.

Properly designed member experience treats the entire arc as a designed journey. A real onboarding moment that orients members to the programme, the platform, and each other. Clear visibility of what is coming next at every stage. Proactive support — check-ins, reminders, encouragement — rather than only reactive help. And a completion moment that is genuinely recognised, not a downloaded PDF certificate that arrives without ceremony.

For an institute, the completion moment matters more than most realise. It is the point where members decide whether the credential they just earned is something they will display proudly or quietly file away.

Six: production craft

The final dimension is the one institute teams sometimes treat as a finishing touch when it is closer to a foundation. Production quality — the visual design, the audio and video, the writing, the smoothness of the platform itself — sets the floor on how seriously members take the programme.

A member's first impression of a digital programme is formed in the first ten seconds. If those seconds look like 2015, the programme is fighting an uphill battle for the rest of its run. Members compare what they see to the production values of the consumer products they use every day, not to the institute's previous digital offerings. The bar has been set elsewhere.

Properly designed production craft does not mean expensive. It means deliberate. Clean visual design that matches the institute's brand. Video and audio that are watchable on a small screen. Written materials that have been designed and edited, not just recycled slides. A platform experience that feels current. Members do not need to be impressed. They need to be confident that the institute is taking the digital format as seriously as the classroom one.

The discipline these add up to

Read across the six dimensions, what emerges is a discipline. It is not a checklist of features and it is not a technology question. It is a way of treating digital as a medium with its own rules, rather than as a thinner version of the classroom.

The institutes that adopt this discipline produce digital programmes that sit comfortably alongside their classroom equivalents, and in some cases surpass them. Institutes that do not adopt it end up with a body of digital work that quietly underperforms and reinforces the suspicion that the classroom is "the real thing."

A resource for institute teams

To make this practical, we have built a Programme Design Audit that scores an existing classroom programme against each of these six dimensions. It is a working tool, designed for the team that would actually own a digitisation project — thirty questions, a dimension-level score, an overall readiness band, and a clear view of where the focused work needs to happen.

Alongside it sits a short Board Briefing — a four-page summary of the same framework, written for the executive or board conversation about whether and how to commit to the work.

Both are free, ungated, and available on our resources page.

Where we come in

At LearnFrame, this is the design discipline we bring to the institutes and membership bodies we work with. Three decades of digital learning experience, a strategic team in Dublin, and a development capability that delivers enterprise-quality work at a fraction of typical agency cost — a structural advantage we have built deliberately, not a compromise on quality.

If your institute is somewhere on this path and you would value an experienced second opinion on a programme you are about to commit to, we would welcome a conversation.